Getting from A-B: How split testing can strengthen your small business marketing efforts

Do you know what kinds of offers your customers are likely to respond to? Can you pinpoint the optimal day/time to hit ‘send’ on your email campaigns? Have you identified your website’s “exit pages” (ie the last pages your visitors typically view before they click away), and are you working on a plan to improve engagement?

Informative and extremely cost-effective, A-B testing (or split testing) may just provide the insights small business owners have been longing for. It allows you to improve conversion rates (and increase revenue) by observing the reaction of your customers to slight differences in your marketing and communications efforts.

An essential ingredient in small business marketing

You may be thinking, “That’s great, but I don’t have time to test my marketing campaigns – I barely have time to run them in the first place!” I get it. It sounds like another heavy burden that will sink to the bottom of your to-do list. The pay-offs, however, are worth the extra elbow grease. I mentor our Tenfold coaching clients to conduct A-B testing because they don’t have room for wasted time or effort in their marketing budgets; every dollar of their marketing spend needs to be working for them as hard as it can.

Numbers will talk to those who listen

The data made available through online marketing and communication channels has changed the way we can track consumer behaviour. Business owners are no longer forced to cross their fingers and hope that their best guess is working for their customers.

Using tools like Google Analytics, you can pit different campaign elements against each other and determine which will deliver a better return on investment. The key (as Hans Rosling once wisely said) is to make the journey from “numbers to information to understanding”. A-B testing can help you make that journey. Because the approach demands that you prove or disprove your theories (which are based on your own expertise and experience), you end up with meaningful information that improves your understanding of what best meets your customers’ needs.

Put it to the test

Small business owners can make great use of split testing on any number of marketing activities and tools including:

- Headlines – in blogs, web copy, emails etc

- Offers or promotions – eg 50% off vs a ‘buy 2 for the price of 1’

- Look and feel – logo, fonts, colours, product image size/placements etc

- Call-to-action – eg “call now” vs “email now”

- Email campaigns – time of day, subject lines, element placement etc

- Newsletter signup and engagement

- Web pages – Landing page activity, bounce rates, exit rates etc

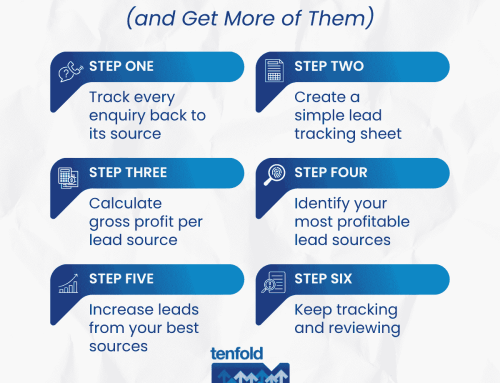

Testing…testing… 123: the small business owner’s guide to A-B testing

1. Get set up with your chosen testing tool

For more detailed information you may need to read through the process of each individual tool. There are many great ones out there but these are a few that rate a mention:

- Google Analytics

- Optimizely

- Unbounce

- VWO

- Mailchimp (mainly for newsletters and emails)

2. Establish a ‘baseline’ for your data

This is important for two reasons:

a) It helps you to prioritise what to test. For example, it may be your instinct to test your landing pages, but the data may indicate that your customers “exit” most at the shopping cart on your website without completing the purchase. A little soul searching may reveal that you are forcing visitors to perform a dummy purchase to find out more about shipping. Therefore, you may need to include the shipping info earlier in the sales process to improve usability for your customers.

b) It gives you a starting point for your testing. You won’t be able to measure increases or decreases in activity on an element if you haven’t ‘taken its temperature’ first.
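As a rough illustration of taking that baseline, the sketch below ranks pages by exit rate (exits divided by page views) so the worst offenders surface first. The page names and figures are made up for the example; in practice you would plug in numbers exported from your own analytics tool.

```python
# Hypothetical figures for illustration only; replace with your own analytics export.
page_stats = {
    "/home": {"pageviews": 5000, "exits": 900},
    "/products": {"pageviews": 3200, "exits": 640},
    "/cart": {"pageviews": 800, "exits": 520},
}


def exit_rates(stats):
    """Return (page, exit_rate) pairs sorted from highest to lowest exit rate."""
    rates = {page: data["exits"] / data["pageviews"] for page, data in stats.items()}
    return sorted(rates.items(), key=lambda item: item[1], reverse=True)


ranked = exit_rates(page_stats)
for page, rate in ranked:
    print(f"{page}: {rate:.0%}")
```

With these invented numbers the shopping cart tops the list, which is the kind of signal that tells you where to test first.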

3. Be clear on your goal

This is no time to think “big”. You will need to keep your objective specific – think “I want to test the difference between placing the text first or picture first in our direct email campaign” vs “I want to test our entire new website design”.

4. Turn that data into ‘information’

Ask yourself what ‘problem’ the numbers indicate – eg not enough customers in our database are clicking through to our website from our direct email campaigns.

5. Come up with your best guess ‘reason why’ (ie your hypothesis)

This will need to be ‘testable’ – eg you hypothesise that customers are not clicking through to the website from your email because the ‘buy now’ link in your current template is not easily visible (it is incorporated in the text and you think a dedicated button would be more effective). You would plan to run a test comparing the existing email (version A) with a version including the new ‘buy now’ button (version B).

6. Set up and run the test

How you do this will depend on which tool you use, but basically it will collect information regarding activity/uptake on elements A and B.
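Most tools handle the split for you, but if you are working from a plain mailing list, a minimal sketch of the idea looks like this. It shuffles the list and cuts it in half so that each customer has an equal chance of receiving version A or version B. The function name, the seed and the example addresses are all assumptions made for illustration.

```python
import random


def split_recipients(recipients, seed=42):
    """Randomly divide recipients into two equal-sized groups (A and B)."""
    shuffled = list(recipients)            # copy so the original list is untouched
    random.Random(seed).shuffle(shuffled)  # fixed seed makes the split repeatable
    midpoint = len(shuffled) // 2
    return shuffled[:midpoint], shuffled[midpoint:]


# Hypothetical mailing list for illustration.
emails = [f"customer{i}@example.com" for i in range(1, 11)]
group_a, group_b = split_recipients(emails)
print(len(group_a), len(group_b))
```

Splitting at random (rather than, say, alphabetically or by sign-up date) helps make sure the only real difference between the two groups is the version they receive.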

7. Analyse the results

If you have collected enough data so that the results reflect a ‘pattern’ (beyond chance), then you can go ahead and see if there is a clear winner. Did you prove or disprove your hypothesis?
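One common way to check whether a difference is ‘beyond chance’ is a two-proportion z-test. The sketch below is one such check, not the method any particular tool uses, and the click counts are invented for the example. A p-value below 0.05 is a widely used (if somewhat arbitrary) threshold for calling a result a pattern rather than luck.

```python
import math


def two_proportion_z_test(clicks_a, sent_a, clicks_b, sent_b):
    """Compare two click-through rates; return (rate_a, rate_b, z, p_value)."""
    rate_a = clicks_a / sent_a
    rate_b = clicks_b / sent_b
    # Pooled rate: the click-through rate if A and B were really the same.
    pooled = (clicks_a + clicks_b) / (sent_a + sent_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    if std_error == 0:
        raise ValueError("Cannot test: no clicks at all, or everyone clicked.")
    z = (rate_b - rate_a) / std_error
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_a, rate_b, z, p_value


# Hypothetical results: version A (text link) vs version B (button).
rate_a, rate_b, z, p_value = two_proportion_z_test(40, 1000, 62, 1000)
print(f"A: {rate_a:.1%}  B: {rate_b:.1%}  p-value: {p_value:.3f}")
```

If the p-value comes out above your threshold, the honest conclusion is "not enough evidence yet", not "B lost".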

8. Test again

This is a game of small moves and elimination. You might prove that you got better results from your original version (A). Right, so your test proved that version B didn’t work. You will need to test again with a new ‘version B’.

9. Implement any changes that cause an uplift in results!

Tips for better testing

- Be consistent. You will need to keep all elements other than the one you are testing exactly the same. Differences in timing, for example, can cause major differences between campaigns, so you should launch both versions concurrently, rather than one at a time.

- Be patient – make sure that you run the test for an appropriate amount of time. For example, when testing two different offers, you may see an initial big uptake and then quick drop off on the first, while the second is more of a slow burn… you will need to see the average numbers over time to know which has ultimately proven more successful. A sample size calculator may be helpful when deciding how long you will need to run a test.

- Keep at it and don’t be put off by modest results. A-B testing takes some practice (you will get better at the hypothesis stage over time) and not all changes will result in impressive 50% increases. If something only makes a 5% difference to your conversion rate, go ahead and implement it. Small tweaks here and there can contribute to an overall gain.

- Try collecting some qualitative data (customer comments) in addition to the quantitative (numbers). For example, you might add an exit survey that grabs customer opinions before they scurry away (“Leaving so soon? Tell us what we can do better”). These insights can help you understand key areas for improvement and future testing.
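To put a rough number on the ‘be patient’ tip, the sketch below estimates how many visitors (or recipients) each version needs before a given improvement is likely to show up reliably. It uses a standard textbook approximation for comparing two rates, with the conventional 5% significance level and 80% power as assumed defaults. The conversion rates and daily traffic are hypothetical.

```python
import math
from statistics import NormalDist


def sample_size_per_version(baseline_rate, expected_rate, alpha=0.05, power=0.8):
    """Approximate number of visitors needed for EACH version (A and B)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_power = NormalDist().inv_cdf(power)          # chance of detecting a real effect
    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    effect = expected_rate - baseline_rate
    return math.ceil((z_alpha + z_power) ** 2 * variance / effect ** 2)


# Hypothetical goal: lift conversions from 4% to 6%.
needed = sample_size_per_version(0.04, 0.06)

# Hypothetical traffic: 200 visitors a day, shared between both versions.
daily_visitors = 200
days = math.ceil(2 * needed / daily_visitors)
print(f"{needed} visitors per version, roughly {days} days")
```

Try a smaller expected lift (say 4% to 4.2%) and the required numbers climb steeply, which is why modest improvements take longer to confirm.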

If you torture data long enough it will confess to anything

One note of caution: when dealing with statistics and data, you should remain objective. You may have personal opinions about which version of a campaign or web page is best, but you must put your own preferences aside. Otherwise you may find yourself manipulating the data to support your own bias, rendering the entire test invalid (and kind of pointless).

And the winner is…

With the data and tools at your disposal (plus a little practice) you can become a split testing machine. Of course, the tools have their limitations – they can’t do the thinking for you. Numbers won’t spill their secrets without the benefit of your expertise and customer insight. Testing is something you could and should be doing almost all the time. It’s low-cost and, while your results probably won’t break the internet, the incremental insights you will gain can really add up to a better ROI on your marketing spend. So, whether it’s A or B that takes out first place, your business will be the one winning every time.